Image Texture Caching

Over the past year I’ve been working on (and to a much greater extent optimising) an image texture cache system for Imagine. Imagine has been able to read planar (entire) images in some form since very early on, but apart from a slightly hacky (though efficient) integration of OpenImageIO that I added just to test things, it hasn’t had proper integrated support for lazily reading (and, more importantly, paging) partial images on demand. This ability is essential for a production-level VFX renderer, as the size and number of image textures used for VFX rendering is pretty extreme.

I could have just integrated OpenImageIO properly into Imagine, but there were a few reasons I didn’t want to. First and foremost, I wanted to write my own and experiment with different ways of handling concurrency, scalability and eviction. Second, I already had my own file readers / writers and didn’t like some of OIIO’s dependencies. Beyond that, OIIO comes with a fair bit of baggage that, at least for my use-case of texture reading, I didn’t care much about: in particular, rather bloated data structures in some cases (partly due to Field3D support requiring matrices). It also had some limitations. It didn’t support constant tiles, although in fairness no open file format supports them either (I’d like to investigate creating a file format at some point), and they can make a huge difference to file I/O bandwidth, which is a very important bottleneck in high-end VFX rendering. It didn’t natively support texture atlasing (e.g. UDIMs). And I wasn’t completely convinced by its efficiency or scalability, although it is definitely a capable and production-proven library. I also wanted to experiment with adding new features like on-the-fly compression of tile data, which would be much easier in a clean, minimal code-base.

Imagine already had file readers, but they only supported reading planar images into image buffer classes, so the first step was to let a file reader describe an image file’s metadata, filling in details such as resolution, number of channels, data type and mipmap levels.

For the general storage of image textures, I created something essentially similar to OpenImageIO’s overall design, in which two main kinds of item are stored in the cache: image items, which represent the image file metadata, and image tiles, which hold the pixel data of the images broken into small tiles. However, I wanted to try slightly different tile-eviction algorithms from the one OpenImageIO uses, as I suspected its method (a mark-and-sweep iterator over the entire tile cache) wasn’t the best approach from a speed and scalability perspective. One idea I had in mind was not removing any tile items at all at eviction time, but only freeing the pixel data itself. This would use more infrastructure memory (excluding pixel data), but it should slightly reduce the need to lock the entire tile cache, which would hopefully help scalability and performance. Given that the classes and structs representing texture items and texture tiles would never be freed when tiles were evicted for paging, it was extremely important that they be as memory-efficient as possible.
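A minimal C++ sketch of what “never free the item, only its pixels” can look like. `TileItem`, `install()` and `evict()` are hypothetical names rather than Imagine’s actual classes, and the sketch deliberately leaves out the harder problem of ensuring no other thread is still reading a buffer when it is freed (reference counts, hazard pointers or epochs would be needed for that).

```cpp
#include <atomic>

// Hypothetical compact tile record. It is inserted into the tile map once and never
// erased; paging only frees and re-creates the pixel buffer it points to.
// NOTE: freeing a buffer another thread may still be reading needs extra machinery
// (reference counts, hazard pointers or epochs) that this sketch leaves out.
struct TileItem
{
    std::atomic<unsigned char*> pixels{nullptr}; // nullptr while paged out

    bool isResident() const { return pixels.load(std::memory_order_acquire) != nullptr; }

    // Publish freshly read pixel data. If another thread already installed a
    // buffer, ours is discarded and false is returned.
    bool install(unsigned char* data)
    {
        unsigned char* expected = nullptr;
        if (pixels.compare_exchange_strong(expected, data, std::memory_order_acq_rel))
            return true;
        delete[] data;
        return false;
    }

    // Free only the pixel data; the TileItem itself stays in the map.
    bool evict()
    {
        unsigned char* old = pixels.exchange(nullptr, std::memory_order_acq_rel);
        const bool wasResident = (old != nullptr);
        delete[] old; // deleting nullptr is a no-op
        return wasResident;
    }

    ~TileItem() { delete[] pixels.load(); }
};
```

Because the record is just one atomic pointer, keeping it around permanently costs little compared with the pixel data it points to.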

I made a conscious decision not to split tiles by the channels requested. That is, if an RGBA image was loaded but only the A channel was requested, I’d still load all four channels into memory, instead of partitioning each tile and having an extra dimension to cope with in the tile hashes. This was partly to keep things simpler, but also to constrain the number of tile items, given that I didn’t want to free them at all when paging, only their pixel data. It does mean, however, that in situations like the example above, memory usage is higher than needed. As is generally done in high-end VFX, the solution is to make sure each texture image contains just one set of channels, so the above example would have two textures: one for the RGB channels and a separate one for the A channel.

I also decided not to use lazy texture loading for HDRI environment maps, keeping those separate, partly because they’re effectively point-sampled anyway (definitely at construction time, when building the CDFs), but also to keep texture requests down for this type of illumination. I also wanted to be able to categorise images by purpose, both to allow different caching / eviction strategies for different types and to allow different kinds of filtering: in some situations, such as alpha/presence maps and layering mask/mix maps, it’s generally better to err on the side of less filtering, even at the expense of possible aliasing.

I started with just the bare basics, as I wanted to experiment with different ways of doing things and see the effect each had. As a starting point I used a single mutex around each map collection, so that I could see what the extreme worst-case scenario was.

Imagine was using ray differentials to calculate texture filter regions, and thus which mipmap levels of the textures to pull in, and trilinear filtering to combine mipmap levels.
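The usual way a filter footprint turns into mipmap levels for trilinear filtering can be sketched as follows: measure the footprint width in level-0 texels, take its base-2 logarithm (each level halves the resolution), and blend the two nearest levels by the fractional part. This is a generic sketch assuming an isotropic footprint already derived from the ray differentials; `selectMipLevels` and `MipSelection` are hypothetical names, not Imagine’s code.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical result of mip selection for trilinear filtering.
struct MipSelection
{
    int   fineLevel;    // more detailed level
    int   coarseLevel;  // next level down (half resolution), clamped to the last level
    float coarseWeight; // 0 => only fineLevel contributes, 1 => only coarseLevel
};

// footprintUV: filter width in UV space (e.g. from ray differentials).
// baseResolution: level-0 width in texels; numLevels: number of mipmap levels.
MipSelection selectMipLevels(float footprintUV, int baseResolution, int numLevels)
{
    // Footprint width measured in level-0 texels (guard against log2(0)).
    float texels = std::max(footprintUV * float(baseResolution), 1e-8f);
    // Each level halves the resolution, so the ideal level is log2 of the width.
    float level = std::min(std::max(std::log2(texels), 0.0f), float(numLevels - 1));
    int fine = int(std::floor(level));
    int coarse = std::min(fine + 1, numLevels - 1);
    return {fine, coarse, level - float(fine)};
}
```

The two selected levels are then each filtered bilinearly and blended with `coarseWeight`, which is what makes the filtering “trilinear”.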

As expected, tests even with just a single plane polygon and one texture in the scene were pretty abysmal, with CPU utilisation on a 16-core / 2-socket system hovering around 300% (instead of the ideal 1600%).

I implemented per-thread microcaches that stored LRU arrays of (hash, item) pairs for both texture items and tile items, which were checked before going to the main cache. In this contrived scenario, other than for the first few buckets where all threads were initially opening files and reading images, CPU utilisation went up to the expected 1600%, fully utilising the available cores.
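A small sketch of such a microcache, assuming a fixed-size array kept in most-recently-used order; `MicroCache` and its methods are hypothetical names. With only a handful of entries, a linear scan plus a short shift is cheap, and since each thread owns its instance, no locking is needed.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <utility>

// Hypothetical per-thread microcache: a tiny fixed-size array of (hash, item)
// pairs kept in most-recently-used order (front = most recent).
template <typename Item, std::size_t N>
class MicroCache
{
public:
    // Returns the cached item for `hash`, or nullptr on a miss. On a hit the
    // entry is moved to the front, so the least recently used entry ends up last.
    Item* find(uint64_t hash)
    {
        for (std::size_t i = 0; i < m_count; i++)
        {
            if (m_entries[i].first == hash)
            {
                auto hit = m_entries[i];
                for (std::size_t j = i; j > 0; j--)
                    m_entries[j] = m_entries[j - 1];
                m_entries[0] = hit;
                return hit.second;
            }
        }
        return nullptr;
    }

    // Inserts at the front, dropping the least recently used entry when full.
    // Only call this after find() missed: duplicates are not checked for.
    void insert(uint64_t hash, Item* item)
    {
        std::size_t last = (m_count < N) ? m_count++ : N - 1;
        for (std::size_t j = last; j > 0; j--)
            m_entries[j] = m_entries[j - 1];
        m_entries[0] = {hash, item};
    }

private:
    std::array<std::pair<uint64_t, Item*>, N> m_entries{};
    std::size_t m_count = 0;
};
```

A renderer might hold one instance per thread, e.g. `thread_local MicroCache<TextureItem, 16> textureMicroCache;` (with `TextureItem` standing in for whatever the texture item type is), calling `find()` first and only calling `insert()` once a miss has been resolved in the main cache.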

Adding other simple objects with the same texture to the scene (so that indirect rays were now being fired incoherently) could scale to a degree if I increased the microcache sizes, but this wasn’t going to be a good approach: the single mutexes around the individual map containers were clearly the bottleneck.

So I needed a solution that would scale well with many threads. In the end I settled on writing my own map container wrapper similar to Java’s ConcurrentHashMap (which is also similar to what OIIO uses, and to how Linux’s hashed spinlocks work). It splits the map into different “bins” (although I called mine “shards”, after the database partitioning technique). Each shard contains its own isolated map and mutex, and the shard used for lookups and insertions is chosen by taking the key’s hash value modulo the number of shards. It’s then possible to lock just that shard and look up within its individual map to find / set the value as required, while concurrent requests from other threads see no contention as long as they map to different shards. If they do map to the same shard, there will be some contention, so it’s extremely important to have a very good hash function that distributes well over the domain space. In my case, after an awful lot of trial and error and playing around with the avalanche effect of hashes for tiles, I came up with hashes that seem to work quite well: I used Austin Appleby’s MurmurHash3 to hash the filename into a 32-bit integer, which is used to look up the texture item itself.
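The sharding scheme can be sketched roughly like this. It is a minimal illustration assuming keys are already 64-bit hashes and that, as in the approach described earlier, values are never erased; `ShardedMap` and `findOrInsert` are hypothetical names. Because `std::unordered_map` never moves its nodes when it rehashes, pointers to values stay valid after the shard lock is released (any concurrent access to the value itself then has to be safe on its own, e.g. via atomics).

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <mutex>
#include <unordered_map>

// Minimal sharded map sketch: each shard owns a mutex and a map, and the key's
// hash picks the shard, so threads only contend when their keys share a shard.
template <typename Value, std::size_t NumShards = 32>
class ShardedMap
{
public:
    // Returns the value for `key`, creating it with `make()` under the shard lock
    // if it doesn't exist yet. Values are never erased, so pointers stay valid.
    template <typename MakeFn>
    Value* findOrInsert(uint64_t key, MakeFn make)
    {
        Shard& shard = m_shards[shardIndex(key)];
        std::lock_guard<std::mutex> lock(shard.mutex);
        auto it = shard.map.find(key);
        if (it == shard.map.end())
            it = shard.map.emplace(key, make()).first;
        return &it->second;
    }

    // Shard chosen by the key's hash modulo the number of shards.
    std::size_t shardIndex(uint64_t key) const { return std::size_t(key % NumShards); }

private:
    struct Shard
    {
        std::mutex mutex;
        std::unordered_map<uint64_t, Value> map;
    };
    std::array<Shard, NumShards> m_shards;
};
```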

For tile items, I ended up using a 64-bit integer formed by adding together the 32-bit texture item hash shifted 32 bits to the left, the mipmap level shifted 24 bits to the left, the tileY coordinate shifted 14 bits to the left, and the tileX coordinate unshifted.
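In code, the tile key might look like the following sketch (`makeTileKey` is a hypothetical name). The field widths are implied by the shifts: tileX gets bits 0-13, tileY bits 14-23 and the mipmap level bits 24-31, so as long as each field stays within its width, adding the fields is the same as OR-ing them. For an 8K texture with 32x32 tiles, level 0 has 8192 / 32 = 256 tiles per axis, comfortably within those limits. The 32-bit texture hash must be widened to 64 bits before shifting, because shifting a 32-bit value left by 32 is undefined behaviour in C++.

```cpp
#include <cassert>
#include <cstdint>

// Tile key layout: texture hash in bits 32-63, mipmap level in bits 24-31,
// tileY in bits 14-23, tileX in bits 0-13 (widths implied by the shifts).
inline uint64_t makeTileKey(uint32_t textureHash, uint32_t level, uint32_t tileX, uint32_t tileY)
{
    // Fields must not overflow into their neighbours, or keys could collide.
    assert(tileX < (1u << 14) && tileY < (1u << 10) && level < (1u << 8));
    // Widen before shifting: a 32-bit value shifted left by 32 is undefined.
    return (uint64_t(textureHash) << 32) + (uint64_t(level) << 24) +
           (uint64_t(tileY) << 14) + uint64_t(tileX);
}
```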

Another very important factor was mixing the hash used to select the shard, so that it isn’t correlated with the hash used within the shard’s own hash map; that correlation can severely affect the efficiency and load factor of each shard’s hash map.
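To see why this matters: if the shard is chosen as `key % 32` and the low bits of a tile key are tileX, every key in a given shard shares the same low 5 bits. If the per-shard map also derives its bucket index from those low bits (for instance a power-of-two bucket count combined with an identity hash, which is what `std::hash<uint64_t>` is in libstdc++ and libc++), most of its buckets can never be used. One way to decorrelate them, sketched below, is to run the key through a strong bit mixer and take the shard from the mixed value’s top bits. The mixer here is MurmurHash3’s 64-bit finalizer (`fmix64`); which mixing function Imagine actually uses isn’t stated, and `shardIndex` is a hypothetical name.

```cpp
#include <cstddef>
#include <cstdint>

// MurmurHash3's 64-bit finalizer: every input bit affects every output bit.
inline uint64_t fmix64(uint64_t k)
{
    k ^= k >> 33;
    k *= 0xff51afd7ed558ccdULL;
    k ^= k >> 33;
    k *= 0xc4ceb9fe1a85ec53ULL;
    k ^= k >> 33;
    return k;
}

// Hypothetical shard selection for 2^shardBits shards (shardBits in [1, 63]):
// mix first, then use the top bits, so the shard index is decorrelated from
// the raw low bits the per-shard map may bucket on.
inline std::size_t shardIndex(uint64_t key, unsigned shardBits)
{
    return std::size_t(fmix64(key) >> (64 - shardBits));
}
```

With plain `key % 32`, the keys 0, 32, 64, … would all land in shard 0; after mixing they spread across all shards.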

With this implementation, scalability was perfect on 16 threads with these simple scenes, so I then stress-tested it with more complex test scenes, and finally with a scene as close to production level, texture-wise, as I could fabricate.

I tested the initial scalability of the locking using “virtual” image texture readers, which just generated texture colours procedurally (tile-based), so as not to be limited by disk speed or OS-level disk caching.

Tests with the extreme worst-case scenario (just a single mutex around the containers, and initially the less-than-ideal std::map<> for lookup structures) were understandably atrocious, although interestingly scaling on Linux was much better than on OS X, probably thanks to Linux’s futexes.

Scalability with sharded maps was much better on OS X, and noticeably better on Linux, scaling close to linearly with 32 shards for both maps. Changing the underlying map type to std::unordered_map (a hashmap) reduced lookup time by around 25%, which was not as much as I had hoped. Tuning the initial bucket count and max load factor for the maps reduced lookup time by a further 10% or so. At this point I was slightly worried that my hashes weren’t as well distributed as I thought, so it seemed a good time both to check the distribution of the hashmaps and to see whether the strategy of not deleting items from the maps gave any benefit.

Without controlling the load factor and initial bucket count, once paging started, not deleting items seemed to give a slight speedup, possibly because tombstones didn’t need to be used to mark items as deleted. However, with the initial load factor and bucket count optimised, the difference was negligible, probably because the hashes mapped very nicely to open buckets with very few collisions. Not deleting tile items did, however, mean that the texture stats could very accurately track the unique texture data read during paging, which is quite useful, so I kept this as an option.
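Tuning the bucket count and load factor of a `std::unordered_map` takes only two standard calls, and the order matters: `reserve(n)` sizes the table for `n` elements using the current max load factor, so the load factor should be set first. The helper name and the example values are hypothetical, not the numbers tuned in Imagine.

```cpp
#include <cstddef>
#include <cstdint>
#include <unordered_map>

// Pre-size a per-shard map so `expectedItems` can be inserted without rehashing.
// Lower max load factors trade memory for shorter bucket chains.
template <typename Value>
void presizeShardMap(std::unordered_map<uint64_t, Value>& map,
                     std::size_t expectedItems, float maxLoadFactor)
{
    map.max_load_factor(maxLoadFactor); // set first: reserve() depends on it
    map.reserve(expectedItems);         // bucket_count() >= expectedItems / maxLoadFactor
}
```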

I experimented at this point with increasing the microcache sizes a bit (to 16 entries per thread for both), and for simple scenes with fewer than 60 textures this made a noticeable difference (especially with paging enabled, as an item in a microcache had been recently used and so shouldn’t be evicted). But once each ray hit was evaluating more than 10 textures for layered materials, the microcaches became barely useful due to almost random thrashing between vertices along each path. I have some ideas for using more tree-like data structures for them in order to take advantage of ray-tree coherence, but the best approach here would really be full-on deferred ray batching / sorting, so I’m not convinced it’s going to be worth it.

At this point, without having to page textures to fit within a particular memory limit, my texture cache was faster than OpenImageIO for practically identical numbers of texture evaluations, but with aggressive paging turned on OpenImageIO was noticeably faster. Comparing the stats of the two, it was obvious that my naive eviction method was causing an awful lot of duplicate reads (I was still using the virtual file readers, so no disk access was taking place, only memory allocations and procedural texture generation). I decided to just copy OpenImageIO’s clever method of marking a tile as recently used with an atomic variable, which allows a very cheap compare-and-swap during the sweep, so recently used tiles can be skipped very accurately and efficiently, although I used a slightly less cumbersome mark-and-sweep process. This change made a huge difference, with my texture cache now being ~5-10% faster than OpenImageIO. I also experimented with over-evicting by a ratio to avoid continual locking: if a request for a new image needed 2KB of data, I’d actually free more than that, doing more work within the one lock so that the next request for a new texture would be less likely to need to lock to evict as well. This made a noticeable improvement, and after experimentation I settled on doubling the target eviction size.
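A rough sketch of this kind of second-chance (“clock”) sweep, combined with the over-eviction ratio of 2 settled on above. It illustrates the idea under stated assumptions and is not OpenImageIO’s or Imagine’s actual code: `Tile`, `TileEvictor`, the byte accounting and the single eviction hand are all hypothetical, and the caller is assumed to hold the eviction lock. Lookups just set the tile’s atomic flag; the sweep uses a compare-and-swap to clear it, skipping tiles that were marked and evicting those that weren’t.

```cpp
#include <atomic>
#include <cstddef>
#include <vector>

// Hypothetical tile state for the sketch.
struct Tile
{
    std::atomic<bool> recentlyUsed{false};
    std::size_t       residentBytes = 0; // 0 => pixel data not in memory
};

class TileEvictor
{
public:
    explicit TileEvictor(std::vector<Tile>& tiles) : m_tiles(tiles) {}

    // Called on every lookup hit: a single cheap atomic store.
    static void markUsed(Tile& t) { t.recentlyUsed.store(true, std::memory_order_relaxed); }

    // Called with the eviction lock held. Tries to free overEvictRatio times the
    // requested amount, so subsequent requests are less likely to need the lock.
    std::size_t evict(std::size_t bytesNeeded, std::size_t overEvictRatio = 2)
    {
        const std::size_t target = bytesNeeded * overEvictRatio;
        std::size_t freed = 0;
        // At most two full passes: the first may only clear marks.
        for (std::size_t steps = 0; steps < 2 * m_tiles.size() && freed < target; steps++)
        {
            Tile& t = m_tiles[m_hand];
            m_hand = (m_hand + 1) % m_tiles.size();
            if (t.residentBytes == 0)
                continue;
            bool expected = true;
            if (t.recentlyUsed.compare_exchange_strong(expected, false, std::memory_order_relaxed))
                continue; // recently used: clear the mark and give it a second chance
            freed += t.residentBytes;
            t.residentBytes = 0; // a real cache would free the pixel buffer here
        }
        return freed;
    }

private:
    std::vector<Tile>& m_tiles;
    std::size_t m_hand = 0;
};
```

Capping the sweep at two passes bounds the work per eviction: even if every tile was marked, the second pass can evict tiles whose marks the first pass cleared, unless they were used again in the meantime.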

I then moved to proper tiled image textures, testing both TIFF (briefly) and OpenEXR. I noticed immediately with OpenEXR that having the worker thread call OpenEXR to read images itself (i.e. with the threadpool size set to 0) caused severe contention, due to redundant locking in IlmBase’s ThreadPool class. Larry Gritz had also spotted this issue previously and had a fix for it on GitHub, which allowed EXR reading from worker threads to scale a lot better. While testing with bigger and bigger scenes, I had to fix several issues with ray differentials not propagating correctly, which caused incorrect point-sampling of the lowest (highest-resolution) mipmap levels and obviously slowed things to a crawl. In the end, for some edge cases, I had to build in texture filtering based on an approximate ray width as a fallback for when ray differentials failed (due to incorrect/inconsistent or missing UVs on meshes).

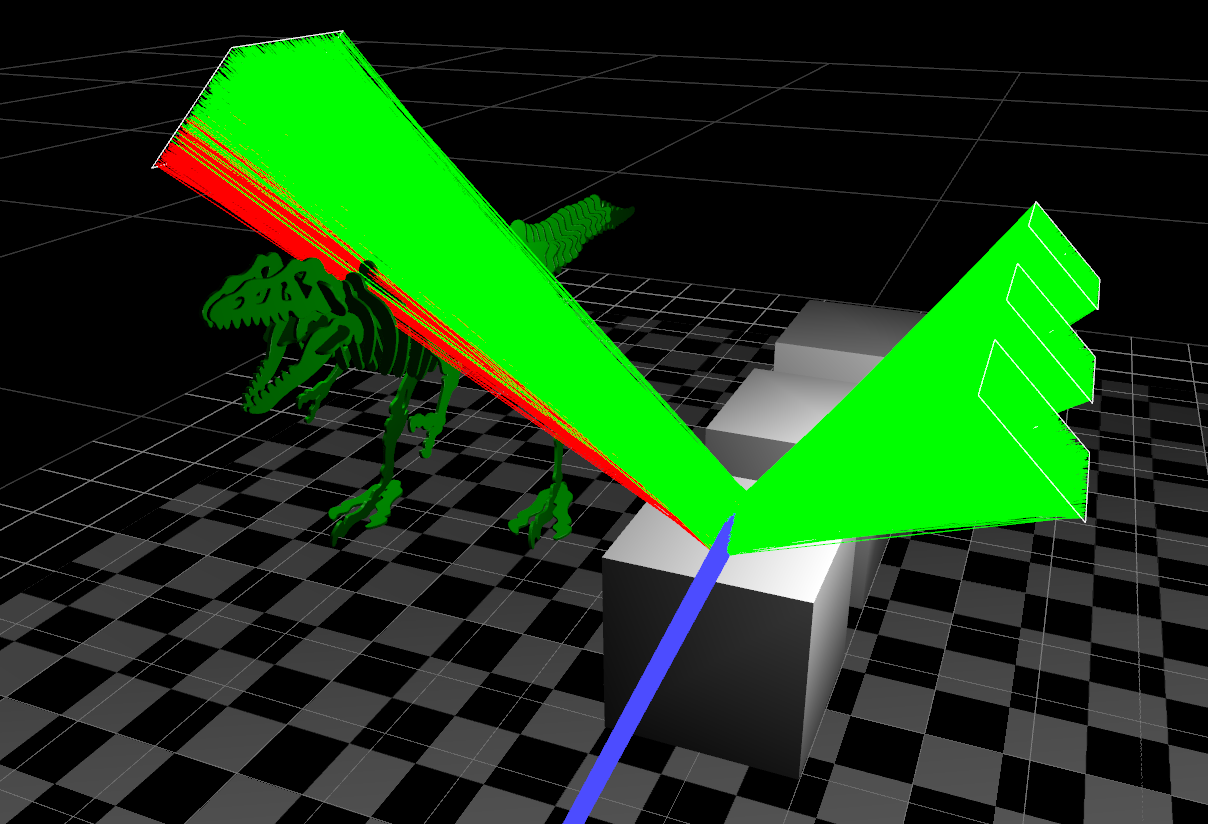

I then scaled things up to an extreme test to stress the cache: a large Cornell-box-style scene containing four production-scale hero objects, made up of many different components with different materials, all with UDIMed textures with varying numbers of layers (one to three, mixed using mask textures), and with diffuse, specular colour, specular roughness, clearcoat reflection and bump textures used by most (but not all) materials. The floor and walls of the Cornell box also had diffuse textures of 10x10 UDIM tiles, so each plane other than the ceiling consisted of 100 texture files.

The total number of image texture files was 898, all tiled, mipmapped OpenEXR files in 16-bit half format at 8K resolution, with a tile size of 32x32. The total size on disk for all textures was over 320 GB.

I tested with the path length set to 6, so there would be a large amount of incoherent texture access.

The cache handled this scene very well and was consistently slightly faster than OIIO. This is probably partly because I do less locking in general, but also because my texture cache has fully integrated support for UDIMs, so it can batch up requests for adjacent tiles on UDIM borders and when filtering, reducing locking even further.
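For reference, the standard UDIM numbering maps a UV coordinate to tile `1001 + floor(u) + 10 * floor(v)`, with ten tiles per row, so the 10x10 walls in the test scene span UDIMs 1001 to 1100. Below is a minimal sketch (with a hypothetical `udimFromUV`) that also returns the UV local to the tile; a filter footprint straddling a UDIM border touches two of these numbers, which is what makes batching those requests possible. It assumes 0 <= u < 10 and v >= 0, the usual UDIM range.

```cpp
#include <cmath>

// Hypothetical UDIM lookup result: tile number plus UV within that tile.
struct UdimLookup
{
    int   udim;
    float localU;
    float localV;
};

// Standard UDIM numbering: 1001 + U tile + 10 * V tile (10 tiles per row).
// Assumes 0 <= u < 10 and v >= 0.
inline UdimLookup udimFromUV(float u, float v)
{
    float tileU = std::floor(u);
    float tileV = std::floor(v);
    int udim = 1001 + int(tileU) + 10 * int(tileV);
    return {udim, u - tileU, v - tileV};
}
```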

I experimented with adding support for compressing pixel tile data in memory using LZ4. The idea was that for constant tiles (which no open standard tiled file format currently supports, so there’s no way to detect them up front), it might be a way to detect them on the fly with a tiny bit of overhead. Testing with simple textures worked well: if the compression ratio was excellent, the tile was obviously constant, so I could mark it as such and not bother evicting it, which brought a slight speed-up. If the compression ratio was merely good, there was some constant data in the tile, and compression meant I could fit more in memory without going back to disk when paging. However, with real-world textures painted in Mari it didn’t work as well, because outside the UV’d area Mari tends to distort texture detail rather than leaving it black, so there’s still texture detail there taking up space. One situation where compression did still provide benefits with real-world textures was layer mask texture maps used for mixing / isolation, which are generally less detailed anyway: it was often possible to detect constant tiles, and even where there weren’t entire constant tiles, image data within tiles still compressed usefully.
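The LZ4-ratio heuristic infers constancy indirectly. As a point of comparison, a direct check is also cheap: compare every pixel against the first one. This is a swapped-in alternative, not what Imagine does, and `isConstantTile` is a hypothetical name. A tile that passes could be stored as a single pixel value and kept resident instead of being paged out.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>

// Direct constant-tile check: true if every pixel's bytes equal the first pixel's.
// Byte-wise comparison is conservative for half/float data (+0 and -0 differ).
inline bool isConstantTile(const uint8_t* pixels, std::size_t pixelCount, std::size_t bytesPerPixel)
{
    for (std::size_t i = 1; i < pixelCount; i++)
    {
        if (std::memcmp(pixels + i * bytesPerPixel, pixels, bytesPerPixel) != 0)
            return false;
    }
    return true;
}
```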

So I now have a very fast, efficient and scalable image texture cache. I still think there could be better texture formats than OpenEXR, which has unfortunately become the standard for textures at VFX level but seems somewhat abandoned. OpenEXR’s threading is really bad, and its use of threadpools doesn’t really make sense for reading multiple random tiles from random mipmap levels in parallel in a path tracer. It’s possible that threadpools are still a win for rendering with coherent access (and they definitely make sense for speeding up reading a single large entire image in image viewers and compositors, and for writing images), but I think a file format for rendering should be completely stateless: metadata decoded once, and then any number of threads able to read / decompress at will without dependencies or state controls. Depending on how the image is compressed there may obviously be problems here, but a balance needs to be found. In addition, OpenEXR doesn’t support 8-bit or 16-bit integer formats, which can be very efficient for certain types of data (masks / isolation maps), nor does it support constant tiles or instanced tiles. So I’m tempted to try creating a texture format just for rendering, optimised for extremely fast random access.