Basic Apple M2 Pro CPU Benchmarks

This month I bought and received a new Apple MacBook Pro (M2 Pro, 14-inch, 2023) with the aim of replacing my own Apple MacBook Pro 15-inch (Intel) 2015 model which I bought in 2016. The MacBook Pro 15 still does work, but the battery life is awful now (I could have it replaced, which I’ve done several times with laptops in the past), the internal fans barely work, and the rubber around the screen is disintegrating, so with a trip back to the Northern Hemisphere planned next month, I thought it was time for a replacement.

A year ago I was given a work-provided MacBook Pro 14 M1 Pro on loan (see previous benchmarks), which I’ve been using a bit, so I mostly knew what to expect in terms of performance and from the laptop in general, but I was curious to compare the performance of the M2 Pro against the M1 Pro (and the old Intel machine).

It’s not going to be a completely fair apples-to-apples comparison: the work-provided MacBook Pro 14 M1 Pro has the 10-core version of the processor, which has two more performance cores than the baseline M1 Pro (eight performance cores and two efficiency cores), whereas the M2 Pro I’ve just bought is the baseline model, with six performance cores and four efficiency cores. It should still provide a rough indication of what performance to expect.

The Xcode / Apple Clang compiler versions are also different. My MacBook Pro 15 (Intel) is still running quite an old macOS version, with an older compiler which I don’t want to update. I did install Xcode 14.3 (as well as the command line tools) on the 2021 MacBook Pro M1 Pro in order to attempt to match what I’d just installed on my new 2023 MacBook Pro M2 Pro, but clang --version still shows version 13.1.6 there, whereas the new M2 Pro MacBook Pro shows 14.0.3 being used. I’m not really sure what’s going on there, as Xcode -> About Xcode shows Version 14.3.1, as I’d expect, on both MacBook Pro 14 machines (and both have the command line tools for that version of Xcode installed).
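One way to investigate a mismatch like this is to ask the system which toolchain it actually resolves. The sketch below is an illustration, not something that was run for these benchmarks: it assumes the standard macOS tools `xcode-select -p` and `xcrun --find clang` are available, and the helper names `parse_apple_clang_version` and `run_first_line` are hypothetical. A possible (unverified) explanation for the 13.1.6 result is that the active developer directory on the M1 Pro machine still points at an older toolchain.

```python
import re
import subprocess


def parse_apple_clang_version(version_output):
    """Return (major, minor, patch) parsed from `clang --version` text, or None."""
    match = re.search(r"clang version (\d+)\.(\d+)\.(\d+)", version_output)
    if match is None:
        return None
    return tuple(int(part) for part in match.groups())


def run_first_line(command):
    """Run a command and return the first line of its stdout, or None on failure."""
    try:
        result = subprocess.run(command, capture_output=True, text=True, check=True)
    except (OSError, subprocess.CalledProcessError):
        return None
    lines = result.stdout.splitlines()
    return lines[0] if lines else None


if __name__ == "__main__":
    # Which developer directory is currently selected (Xcode.app or the standalone tools).
    print("active developer dir:", run_first_line(["xcode-select", "-p"]))
    # Which clang binary xcrun resolves for that developer directory.
    print("clang resolved by xcrun:", run_first_line(["xcrun", "--find", "clang"]))
    # The version reported by whichever clang is first on the PATH.
    first_line = run_first_line(["clang", "--version"])
    print("clang version:", parse_apple_clang_version(first_line or ""))
```

If the two machines print different developer directories, that would be a reasonable place to start looking.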

The MacBook Pro 14s are both running macOS Ventura 13.4.

Tests:

Copying what I did in the test last year, I’ll be using two of my apps as benchmarks. The first is my Mint interpreter language VM (originally based on Robert Nystrom’s excellent Crafting Interpreters Lox language tutorial, but with additional functionality and performance improvements), which I’ll use to benchmark two Mint scripts as single-threaded tests. The second is my Imagine pathtracing renderer, which has native SSE intrinsics support for Intel and native Neon intrinsics support for ARM, and which I’ll run in both single- and multi-threaded scenarios.

Both apps will be compiled at optimisation level -O3, with -march=native on the Intel side and -mcpu=native on the Apple Silicon / ARM side, using the clang version on the machine in question.
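As a rough illustration of that flag choice, here is a minimal sketch that assembles a compiler command line depending on the machine architecture. The function names, the `clang++` driver, and the source and output file names are all assumptions for the example; the actual build setup for Mint and Imagine is not described here.

```python
import platform


def native_tuning_flag(machine):
    """Pick the 'tune for this CPU' flag: -mcpu on ARM, -march otherwise (Intel)."""
    if machine in ("arm64", "aarch64"):
        return "-mcpu=native"
    return "-march=native"


def build_command(sources, output, machine=None):
    """Return a compiler argument list; defaults to the architecture of this machine."""
    machine = machine or platform.machine()
    return ["clang++", "-O3", native_tuning_flag(machine), *sources, "-o", output]


if __name__ == "__main__":
    # Hypothetical file names, printed rather than executed.
    print(" ".join(build_command(["main.cpp"], "benchmark_app")))
```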

The two Mint script tests will be a loop value calculation as Test 1:

    var a = 1;
    for (var i = 0; i < 100000000; i += 1)
    {
        a = (i + i + 3 * 2 + i + 1 - 0.42) / a;
    }
    print a;

and a variation of Project Euler 21 to calculate the sum of all Amicable numbers under 15,000 as Test 2.
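For readers unfamiliar with the problem, Test 2 can be sketched in Python as below. This is not the Mint script used for the benchmark (which is not shown here) and may use a different algorithm; it is just a minimal illustration of summing amicable numbers below a limit, using a sieve-style table of proper-divisor sums. The function names are hypothetical.

```python
def proper_divisor_sums(limit):
    """Return a table where entry n is the sum of the proper divisors of n (n < limit)."""
    sums = [0] * limit
    for divisor in range(1, limit // 2 + 1):
        # Add this divisor to every larger multiple of it below the limit.
        for multiple in range(2 * divisor, limit, divisor):
            sums[multiple] += divisor
    return sums


def sum_amicable_below(limit):
    """Sum every n < limit whose amicable partner is different from n and also < limit."""
    sums = proper_divisor_sums(limit)
    total = 0
    for n in range(2, limit):
        partner = sums[n]
        if partner != n and partner < limit and sums[partner] == n:
            total += n
    return total


if __name__ == "__main__":
    print(sum_amicable_below(15000))
```

With the original Project Euler limit of 10,000, `sum_amicable_below(10000)` returns 31626, which is a handy sanity check before raising the limit.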

The Imagine rendering tests will render three different scenes in both single- and multi-threaded mode.

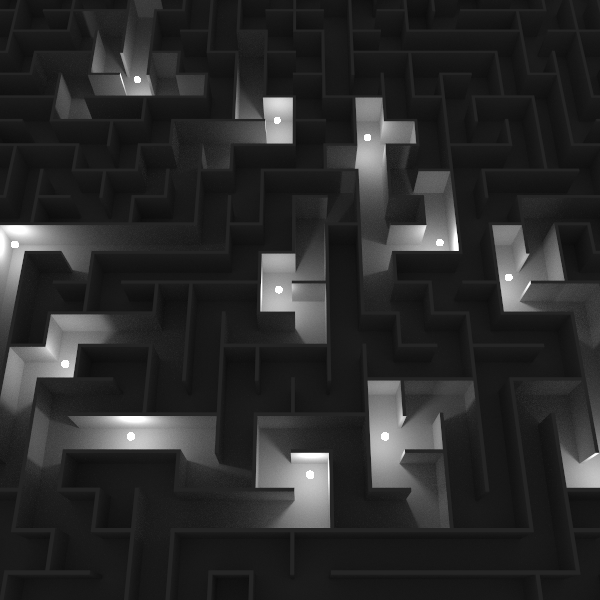

The first render will be the same maze scene with spherical area lights that I used in the test last year (example image above), but with different settings: resolution will be 256 x 256, 256 samples-per-pixel will be used, but this time only one next-event light sample will be taken at each path vertex. The general ray traversal and ray intersection will utilise SIMD, but the (fairly expensive) perfect light sphere sampling is scalar, and very unlikely to be vectorised by the compilers themselves.

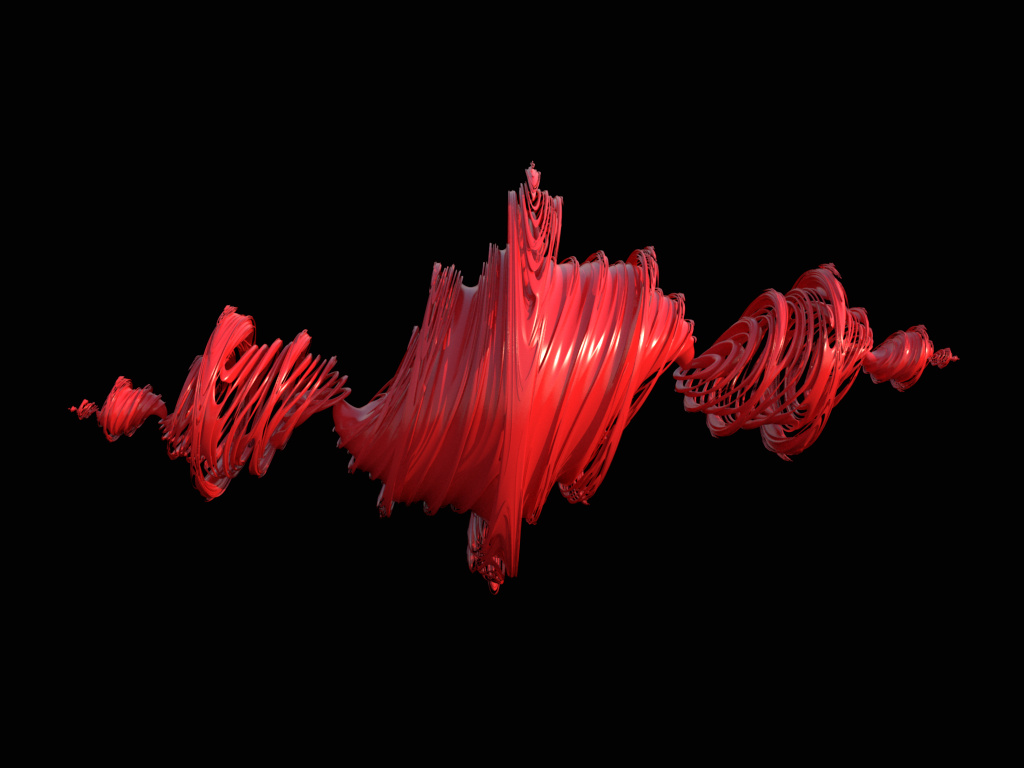

The second render will be a 450 x 338 resolution render of a Signed Distance Field primitive of a Julia Fractal (example image above, although with different settings), which is quite expensive to evaluate, and also does not have any SIMD utilisation for the SDF evaluation / intersection. There’s a physical Sky IBL in the scene as a light, and 144 samples-per-pixel will be used, with 3x3 Blackman Harris pixel filtering being used.

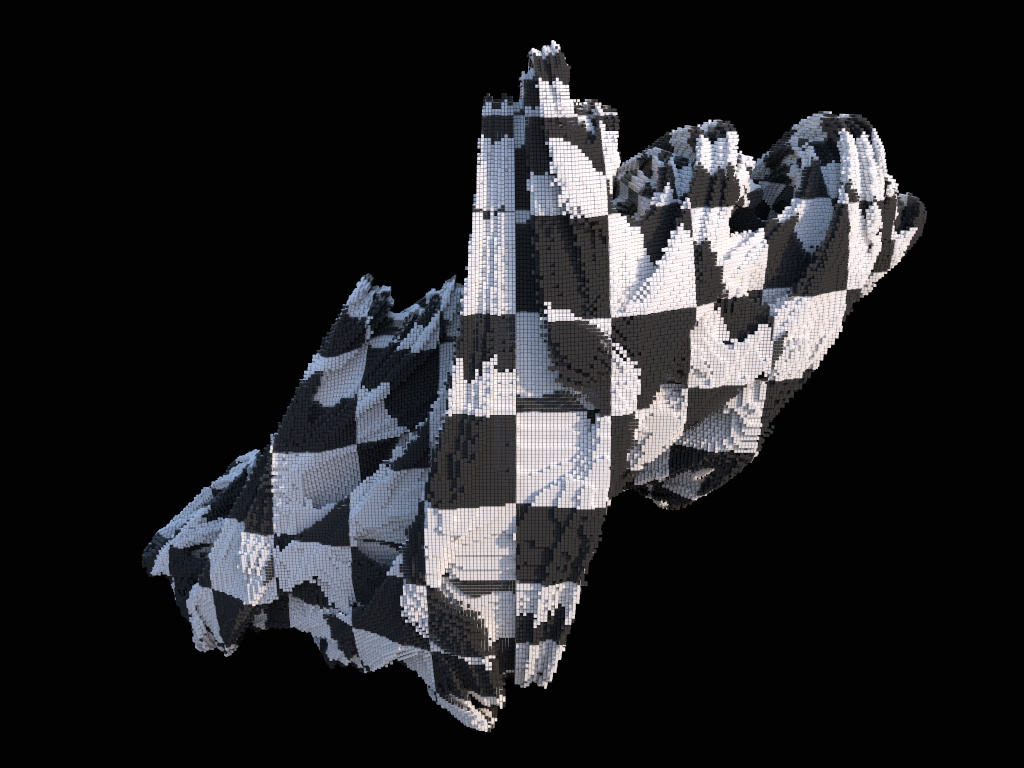

The third render will be a 450 x 338 resolution render of 2,326,299 instanced mesh cubes in the (pre-calculated) shape of a Julia Fractal, which will fully utilise SIMD instructions for ray traversal in the BVH and for ray / primitive intersections. Again, there’s a physical Sky IBL in the scene and 144 samples-per-pixel will be used, but this time no pixel reconstruction filtering will be used (so effectively Box 1x1).
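To give a feel for the relative size of the three renders, the following sketch multiplies out resolution and samples-per-pixel to get the number of camera samples per scene. It only counts pixel samples: it ignores path length, light sampling, and per-sample cost, so it is not a measure of render time. The label for the third scene is made up for the example.

```python
def camera_samples(width, height, samples_per_pixel):
    """Total number of camera (pixel) samples for a render."""
    return width * height * samples_per_pixel


# (width, height, samples-per-pixel) as listed for each test scene.
SCENES = {
    "Maze Lights": (256, 256, 256),
    "SDF Julia Fractal": (450, 338, 144),
    "Instanced Julia Fractal cubes": (450, 338, 144),  # hypothetical label
}

if __name__ == "__main__":
    for name, (width, height, spp) in SCENES.items():
        print(f"{name}: {camera_samples(width, height, spp):,} camera samples")
```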

These tests will all be done on (close to fully-charged) battery power - I discovered in the tests last year that Apple doesn’t seem to down-clock on battery power - and I will also wait between test runs for the processor temperatures to be below 50 degC before running the next test, to try and reduce the impact of thermal throttling.

All tests will be run three times, and results below will show the mean average of those numbers.
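The averaging itself is simple; a minimal sketch of a three-run timing helper might look like the following. This is not the harness used for these results: the function names are hypothetical, and the workload is a stand-in for launching the real benchmark.

```python
import statistics
import time


def time_once(workload):
    """Return the wall-clock seconds taken by a single call to workload()."""
    start = time.perf_counter()
    workload()
    return time.perf_counter() - start


def mean_runtime(workload, runs=3):
    """Run the workload several times and return (mean seconds, individual timings)."""
    timings = [time_once(workload) for _ in range(runs)]
    return statistics.mean(timings), timings


if __name__ == "__main__":
    # Stand-in workload; a real run would invoke the benchmark binary instead.
    mean_seconds, timings = mean_runtime(lambda: sum(range(1_000_000)))
    print(f"mean of {len(timings)} runs: {mean_seconds:.4f} s")
```

Keeping the individual timings alongside the mean makes it easier to spot a run that was affected by thermal throttling.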

Results

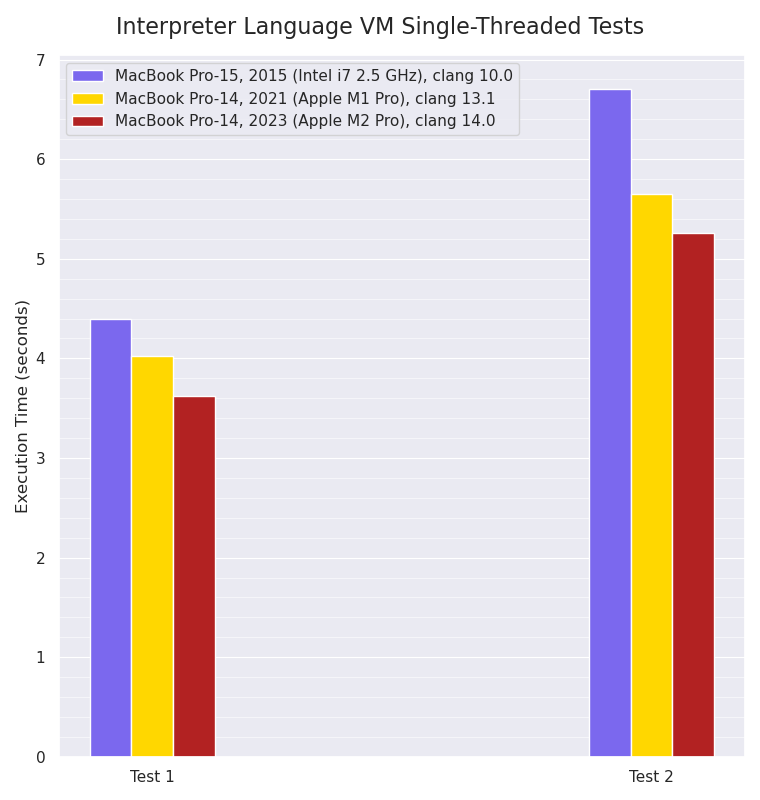

Single-threaded Mint VM interpreter:

Single-threaded Mint interpreter VM benchmarks, smaller values are better:

For these single-threaded tests, the M2 Pro has a small improvement over the M1 Pro’s performance, which itself is around 10-16% faster than the eight-year-old Intel i7 processor. As mentioned last year however, I think this is very likely because the Mint VM execution is often branch-prediction constrained within the main VM bytecode interpreter loop, so there’s a limit to the amount of Instruction-Level Parallelism that’s achievable from the eight-wide M1 Pro and M2 Pro.
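Since the results are runtimes (smaller is better), figures such as "10-16% faster" or "2x faster" can be derived from a pair of timings as sketched below. This assumes "faster" means the ratio of the old runtime to the new runtime, which is one common convention and not necessarily the exact calculation used here; the numbers in the example are placeholders, not measured results.

```python
def speedup(baseline_seconds, new_seconds):
    """Ratio of old runtime to new runtime; 2.0 means the new machine is 2x faster."""
    return baseline_seconds / new_seconds


def percent_faster(baseline_seconds, new_seconds):
    """Speedup expressed as a percentage gain over the baseline."""
    return (speedup(baseline_seconds, new_seconds) - 1.0) * 100.0


if __name__ == "__main__":
    # Placeholder timings purely for illustration.
    print(f"{speedup(10.0, 5.0):.2f}x")
    print(f"{percent_faster(11.0, 10.0):.1f}% faster")
```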

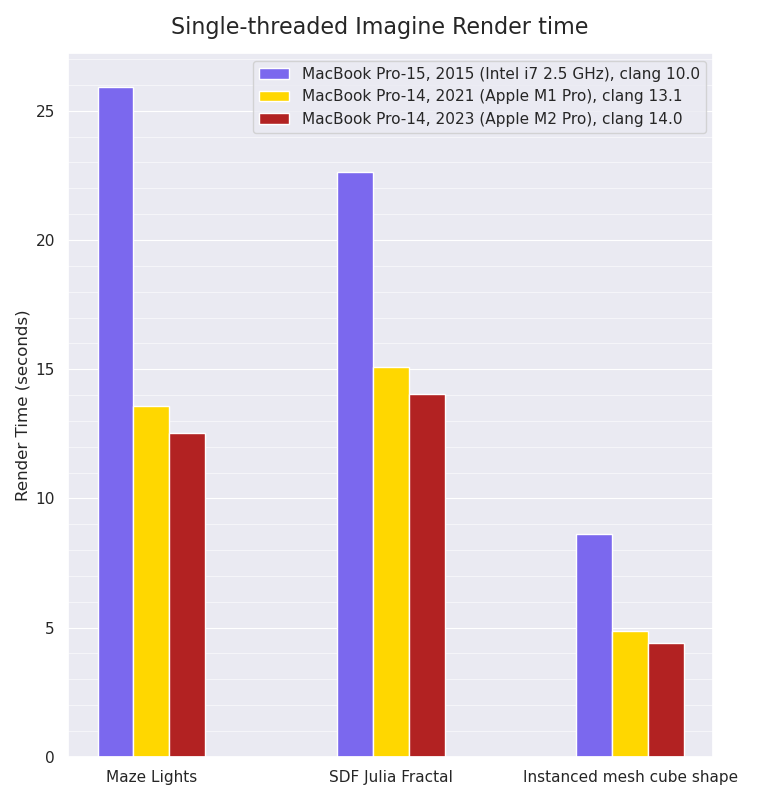

Single-threaded Imagine rendering:

Single-threaded Imagine rendering benchmarks, smaller values are better:

The single-threaded rendering tests, which have a lot more floating point calculations and SIMD usage, show a small performance improvement for the M2 Pro over the M1 Pro. Intriguingly, the Maze Lights scene is the test with the biggest performance increase (almost 2x faster) from the Intel machine to the Apple M1 Pro: the other tests show slightly smaller gains, which I wouldn’t have expected. Without further microbenchmarks of various isolated parts of those tests, it’s difficult to guess why that might be, but the different render tests do exercise different calculations and code paths.

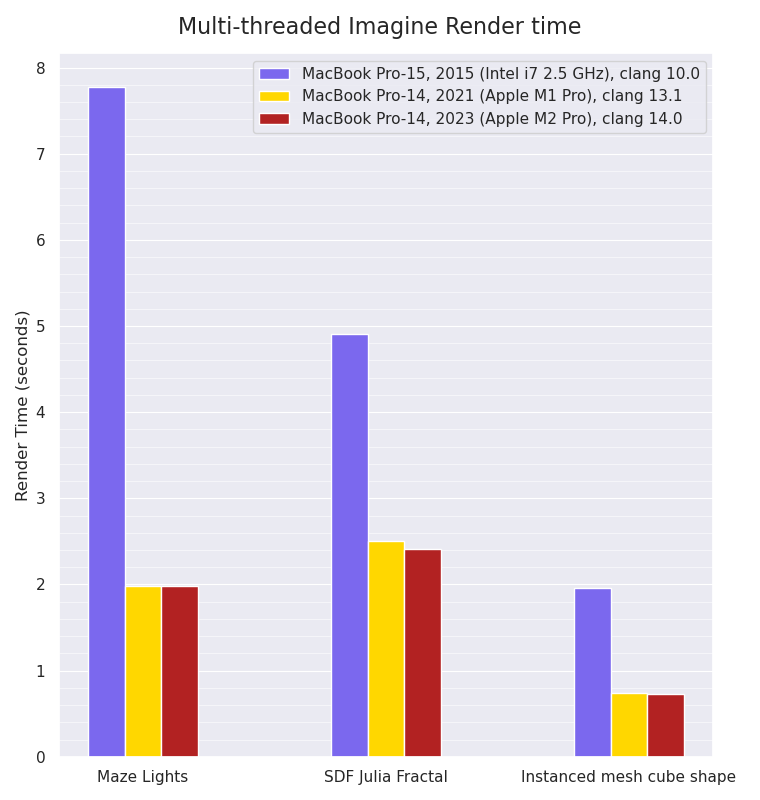

Multi-threaded Imagine rendering:

Multi-threaded Imagine rendering benchmarks, smaller values are better:

The multi-threaded rendering tests show that, because the M1 Pro machine has eight performance cores and two efficiency cores whilst the M2 Pro machine only has six performance cores and four efficiency cores, the M2 Pro machine is only very slightly faster than the M1 Pro machine in the SDF Julia Fractal render scene, and ties in the other two tests. Once again, the Maze Lights scene shows the biggest performance increase - almost 4x faster - from the Intel CPU to the Apple Silicon ones, with the other two tests showing a less dramatic difference (between 2x and 3x faster).

Conclusion

I think that’s not too bad a showing for the baseline model: the M2 Pro beats the M1 Pro by a small margin in all single-threaded tests, and either just about equals or slightly beats the M1 Pro, which has two more performance cores (but two fewer efficiency cores), in the multi-threaded tests.